The Psychology of an AI Utopia

Reflections from the 2026 World Economic Forum recordings.

Last week, world leaders met in Davos, Switzerland, for the annual World Economic Forum conference. Nowadays, foremost among the world’s most powerful people are the CEOs and founders of AI companies, including Elon Musk (xAI, Grok), Demis Hassabis (Google DeepMind, Gemini), and Dario Amodei (Anthropic, Claude). (Sam Altman of OpenAI, maker of ChatGPT, notably decided to skip this year’s event.)

Beyond discussions of geopolitics, international security, climate change, and the global economy, artificial intelligence took center stage. Is AI a catalyst for utopia or an existential threat? Or is it better understood as analogous to the early Internet: a technology that will undoubtedly reshape the world, but one to which humans will adapt relatively easily, with inflated expectations giving rise to a speculative stock market bubble?

Rightly or wrongly, extreme predictions dominated the discussion. Of course, it serves the creators of the world’s leading AI models to frame their technology as something that cannot be ignored, a civilizational milestone to rival the taming of fire or electricity. And they have every interest in framing superintelligence as a boon to humanity. (For a primer on concerns about existential risk, see Eliezer Yudkowsky and Nate Soares’s If Anyone Builds It, Everyone Dies.)

As rational optimists who believe in human progress, let’s, for the moment, take the founders at their word and instead apply healthy skepticism toward the pessimists. Musk and Amodei believe that we will achieve artificial general intelligence (AGI)—AI that is smarter than any human—by next year. That is not an entirely unreasonable claim. Only seven years ago, GPT-2 could barely string together a logical paragraph. Last year’s GPT-5, for all its propensity for AI slop, knows almost everything about almost everything and can serve as a surprisingly good conversation partner.

Moreover, state-of-the-art AI now outperforms many software engineers at most coding tasks. Amodei has stated that most of the code written at Anthropic is now produced by Claude itself and merely checked by human engineers. This suggests that we are approaching the “singularity,” the point at which AI can improve itself. From there, progress toward superintelligence could be rapid. At the very least, even if AI does not generate genuinely new ideas, it saves engineers time implementing their own improvements, which may offset the diminishing returns from scaling models with ever more compute.

Hassabis was more conservative, estimating a 50 percent probability of AGI by 2030. By civilizational standards, that is still insanely fast. Aside from those who believe AI progress will hit some glass ceiling, there is near-universal agreement among experts that AI will eventually become smarter than any human, possibly within the next decade; they disagree mainly on how soon.

In his utopian vision, Musk imagines AGI paired with advances in robotics, producing a post-scarcity world of superabundance. Every good and service would be cheap and available on request, and robots would do our chores and care for the elderly, much like in The Jetsons.

Ultimately, the limiting factor for material progress, and for progress in AI, is energy. As Musk notes, well over 99 percent of the energy in our solar system comes from the sun. With modern solar panels, an area the size of Texas could power the entire world. A 100-mile-by-100-mile area of desert could power the whole United States today. That is before even considering advances in nuclear energy or the possibility of relocating AI data centers to space. Yes, really. Solar panels in space would be roughly five times more effective than those in even the sunniest regions on Earth, since in a suitable orbit they would face no night, no clouds, and no light lost to the atmosphere. And satellites could shed their waste heat by radiating it into space, without consuming the water and energy that terrestrial data centers spend on cooling.

There is strong reason to believe that AI and material abundance will continue to advance simply as we get better at implementing existing technology, even before accounting for future innovations. And yes, there are legitimate concerns about the destructive potential of AI, whether as a rogue superintelligence or as a tool wielded by human bad actors. But I am more interested in a different question, one that I was pleasantly surprised to hear Musk and Hassabis discuss at the World Economic Forum:

What psychological difficulties would we face in this hypothetical utopia, assuming everything goes right?

The quest for meaning, they believe, will prove harder than any of our technical or economic challenges.

In the best-case scenario, where AI and robots take care of virtually every need and provide all the entertainment we could ask for, what is left for humanity? What would be our purpose?

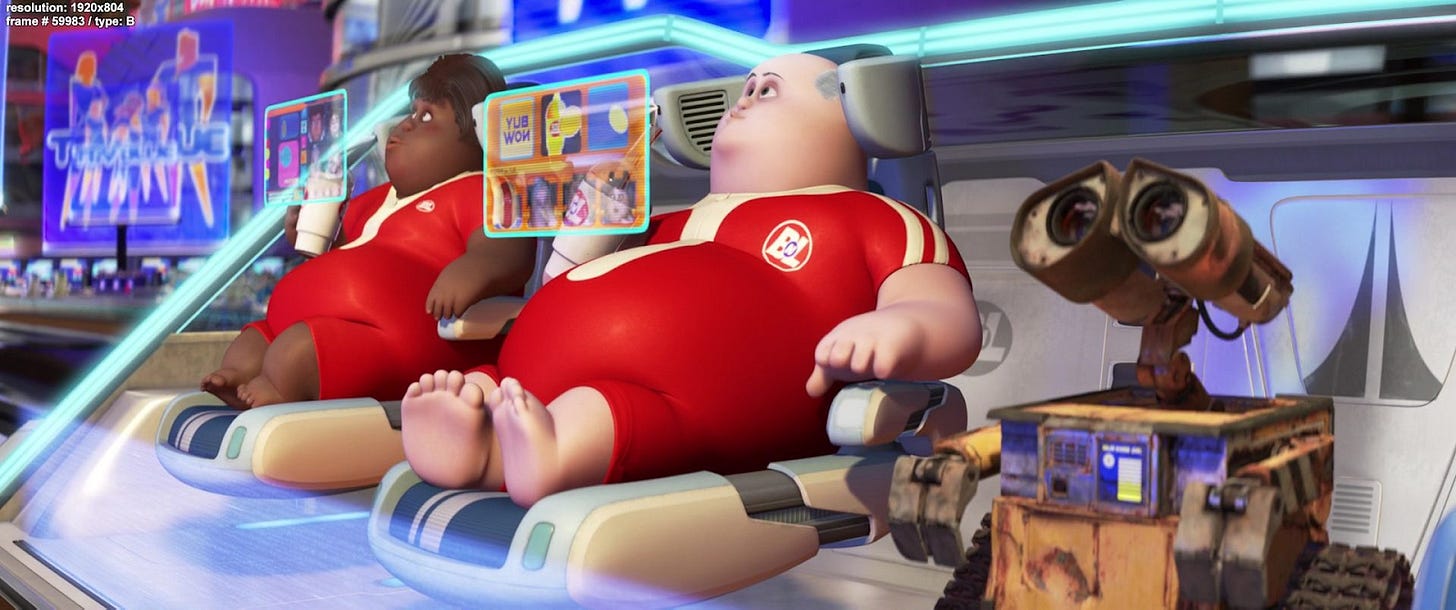

One of the best modern depictions of how a utopia could turn into a dystopia, albeit a hyperbolic one, comes from the animated children’s film Wall-E (2008).

The humans in Wall-E’s future enjoy every material comfort. They are whisked around on hoverchairs with built-in holographic entertainment, and robots bring them any snack they desire. Yet they are rendered dependent, obese, immobile, and useless. They are so detached from the struggle to survive that it is difficult to imagine them having any real sense of purpose or pride, or any worthy challenge to meet.

Nearly a century ago, Aldous Huxley described a similar dystopian utopia in Brave New World (1932). People were healthy, safe, and comfortable from birth to death. There was no poverty, no war, and very little suffering. Humans were genetically engineered into castes, conditioned from infancy to enjoy their place in society, and kept content through endless distraction, casual sex, and a happiness-inducing drug called soma. But eliminating hardship also eliminated meaning. There was no struggle, no ambition, no genuine love, and no freedom to choose a different life. Art, religion, and deep emotional bonds were seen as destabilizing and therefore suppressed. People were oppressed not by force but by pleasure and conditioning. They were never encouraged to ask what their lives were for, because they were always comfortable enough not to care.

In both stories, the hero realizes that comfort has come at the expense of agency, and that a life without struggle is not a life fully lived. In Wall-E, humans choose to return to Earth, knowing it will be hard. In Brave New World, John the Savage rejects the World State’s promise of effortless happiness. He insists on the right to suffer, to strive, to love deeply, and to fail. Humans need to struggle to find meaning, and they need meaning to survive.

That is why Human Progress has created a new project on the Psychology of Progress. By nearly every objective metric—life expectancy, global wealth, connectivity, health, and living standards—humanity is doing better than at any point in history. Comforts and freedoms once reserved for elites are now widely accessible, and technologies that would have sounded like science fiction a generation ago are now part of everyday life. Yet humans evolved under conditions of scarcity, and some of today’s psychological and social pathologies may be downstream of progress itself. Endless entertainment can become addictive and overstimulating. Excess leisure and convenience may breed fragility. Greater freedom can produce anxiety and choice paralysis. A lack of intrinsic meaning in the struggle to survive may lead to nihilism and purposelessness.

Progress rarely removes problems outright; it replaces old ones with new ones. The good news is that in wrestling with problems, we may find our purpose. A credible pro-progress narrative cannot rest on material gains alone; it must also grapple with meaning, agency, and human flourishing in a world of abundance. Seeing that discussion begin was my personal highlight of the 2026 World Economic Forum. It is not enough to have technology and policies that enable global progress. We must also develop the psychological narratives that allow us to embrace progress as an opportunity to expand agency and purpose, rather than becoming the complacent shells of ourselves that science fiction warns us about.

Your concern that “a lack of intrinsic meaning in the struggle to survive may lead to nihilism and purposelessness” seems to be in tension with your own claim that “humans need to struggle to find meaning, and they need meaning to survive.” Human beings are persistent meaning-making agents, and history suggests that even when traditional frameworks collapse, people inevitably generate new sources of purpose.

The real issue, then, would not be the permanent absence of meaning, but uncertainty about what kinds of meaning will emerge and whether they will be psychologically healthy and socially stabilizing. Periods in which older systems of meaning erode before new ones consolidate tend to produce normative disorientation, in which individuals actively search for purpose but may gravitate toward simplified, absolutist, or polarizing narratives. In such transitions, the danger lies less in nihilism itself than in the possibility that the emerging systems of meaning will be exclusionary, radicalizing, or detached from reality.

In other words, the main concern should not be a lasting “lack of meaning,” but the character, quality, and social consequences of the meanings that will replace what is lost.

I like the way you put it: “As rational optimists who believe in human progress, let’s, for the moment, take the founders at their word and instead apply healthy skepticism toward the pessimists.”